Deepfake Interviews in Recruiting: How to Detect AI Voice and Video Fraud (2026)

Researcher

Remote hiring unlocked speed. It also unlocked a new kind of fraud.

A candidate shows up on Zoom, sounds confident, answers cleanly, and moves quickly through your funnel. You extend an offer. Equipment ships. Then security flags something off: the login location does not match what the candidate claimed, remote access tooling appears, or the person using the device is not the person you interviewed.

This is not a niche edge case. The FBI Internet Crime Complaint Center (IC3) has warned about deepfakes and stolen personally identifiable information (PII) being used to apply for remote roles, including reports of voice spoofing and of audio that does not match the lip movement seen on camera.

This guide is built for recruiting leaders who want practical detection and prevention, without turning every interview into a hostile interrogation.

TL;DR: the fastest way to reduce deepfake interview risk

If you only do five things, do these:

Treat identity as a continuity problem, not a one-time check.

Add finalist-stage identity verification, including matching ID to the person on camera.

Detect AI-assisted cheating and live coaching, especially in high-stakes roles.

Verify location when it matters, and flag VPN/proxy usage and inconsistencies.

Use layered signals across email, phone, resume, and interview behavior, so fraud has to beat multiple independent gates.

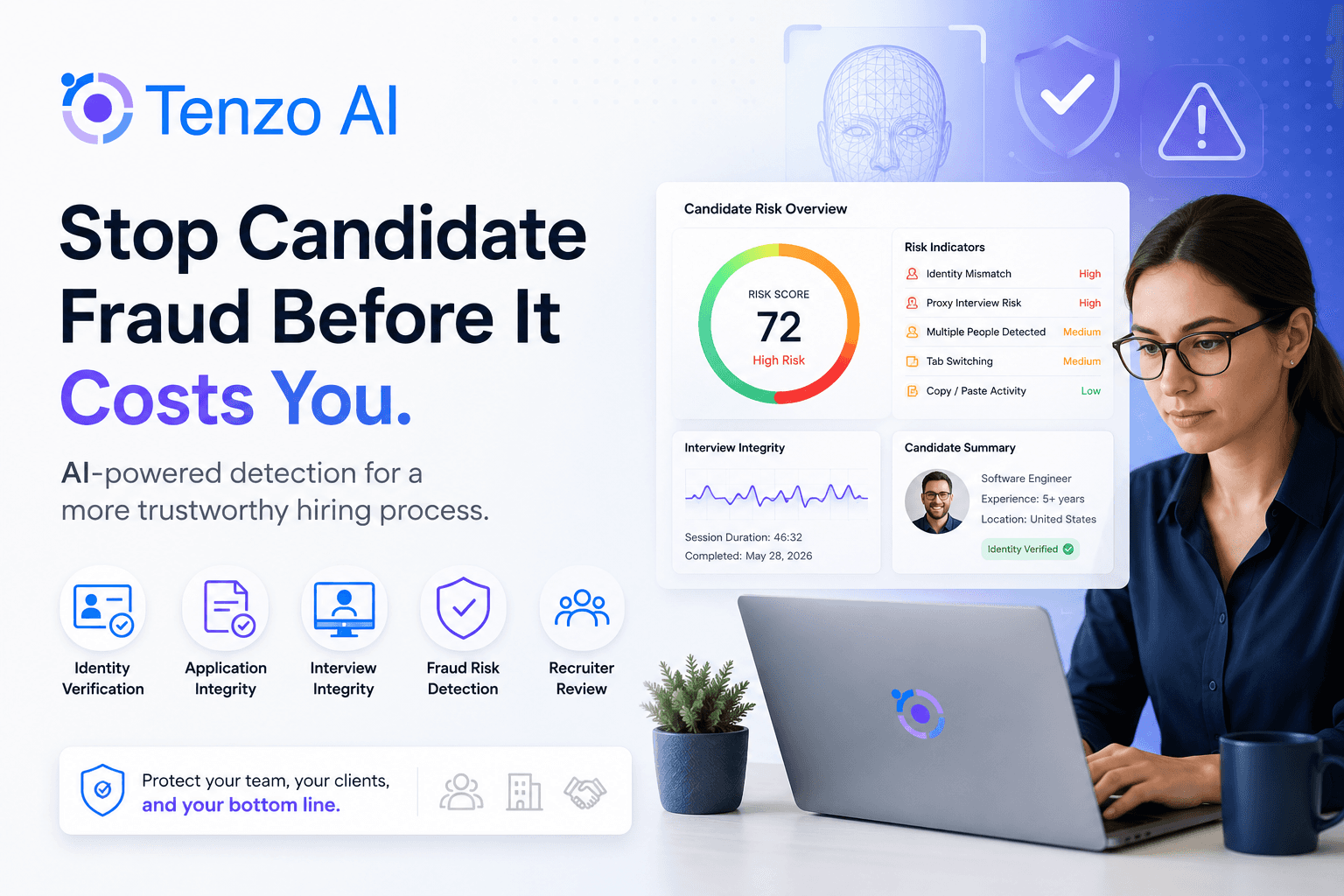

That layered approach is exactly how Tenzo is designed to work in real recruiting operations, not as a bolt-on afterthought.

What is a "deepfake interview"?

A deepfake interview is when an applicant uses synthetic media or deception to misrepresent identity or performance during your hiring process.

In practice, it typically shows up as one (or a combination) of these:

AI voice fraud: voice spoofing, voice conversion, or voice cloning used during phone screens or video calls.

Video interview fraud: face swaps, avatar overlays, or manipulated video used to impersonate a different person.

Stolen or synthetic identity: real PII (or a blend of real and fabricated details) used to pass background checks under someone else’s name or an invented one.

Interview cheating: real person, real video, but answers are being generated live (AI copilots) or fed by another person off-camera.

IC3 specifically notes complaints where voice spoofing was used in online interviews and where on-camera lip movement and actions did not align with audio.

Separately, the FBI has warned that North Korean IT worker schemes include face-swapping during video job interviews and operational patterns like reused contact info across resumes.

What deepfake interview fraud looks like in real hiring workflows

Fraud rarely happens as a single "gotcha" moment. It is usually a workflow designed to survive each gate in your funnel.

1) Application stage: scale and repetition

This is where fraudsters try to overwhelm your intake.

Common patterns:

Resumes that are oddly uniform in structure and phrasing across different candidates

Reused phone numbers, especially VOIP numbers, across multiple applicants

Similar email patterns or domains across candidates

Portfolio links that exist but do not match the claimed ownership

IC3 explicitly recommends cross-checking HR systems for other applicants with the same resume content and contact information and reviewing communication accounts, including reused phone numbers and emails.
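The contact-info cross-check can be sketched in a few lines. This is a minimal illustration, not a description of any particular ATS or vendor: the applicant record shape (`id`, `phone`, `email`) and the function names are assumptions made for the example, and comparing on the last 10 digits is a simplification that suits U.S.-style numbers.

```python
import re
from collections import defaultdict


def normalize_phone(raw: str) -> str:
    """Keep digits only and compare on the last 10, so formatting does not hide reuse."""
    digits = re.sub(r"\D", "", raw)
    return digits[-10:]


def find_reused_contacts(applicants):
    """Return {(field, value): [applicant ids]} for any phone or email shared by 2+ applicants."""
    seen = defaultdict(set)
    for applicant in applicants:
        phone = normalize_phone(applicant.get("phone", ""))
        if phone:
            seen[("phone", phone)].add(applicant["id"])
        email = applicant.get("email", "").strip().lower()
        if email:
            seen[("email", email)].add(applicant["id"])
    return {key: sorted(ids) for key, ids in seen.items() if len(ids) > 1}


# Hypothetical records for illustration only.
applicants = [
    {"id": "A1", "phone": "+1 (415) 555-0100", "email": "jane@example.com"},
    {"id": "A2", "phone": "415.555.0100", "email": "j.smith@example.com"},
    {"id": "A3", "phone": "212-555-0199", "email": "JANE@example.com"},
]
reused = find_reused_contacts(applicants)
```

Here A1 and A2 share a phone number despite different formatting, and A1 and A3 share an email that differs only by case. A match is a prompt for review, not proof of fraud: family members and staffing agencies can legitimately share contact details.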

2) Recruiter screen: AI voice fraud and answer generation

Audio-only screens are high leverage for fraudsters. If they can get past the voice screen, they reach the hiring manager.

What it can look like:

Consistent micro-delay before speaking, especially after interruptions

Unnaturally polished answers that collapse when you ask a specific follow-up

Overly structured responses that sound like they were generated then read

A strong push to keep things async, text-based, or camera-off

IC3 has warned that complaints include voice spoofing or potential voice deepfakes in interviews.

3) Live video: the deepfake moment (or the distraction)

Video fraud can be very subtle. The attacker’s goal is not perfection. It is plausibility.

Reported red flags include:

Lip movement and audio not fully coordinating

Non-speech actions (like coughing or sneezing) not aligning visually

Operationally, schemes may avoid video entirely. NYDFS notes that threat actors may decline in-person meetings or video conferences and prefer messaging or phone, while using VPNs to appear U.S.-based.

4) Evaluation stage: cheating and live coaching

Even when the identity is "real," cheating can destroy your signal.

Today’s cheating is not just "Googling." Some tools explicitly market real-time answer feeds based on what they see on your screen and hear in your audio.

What it looks like in interviews:

Great high-level explanations, weak live reasoning

Answers that are polished but shallow under pressure

Eye gaze patterns that suggest reading a second screen

Strange timing, like a short delay then a flawless paragraph

5) Offer and onboarding: where location and device risk show up

This is where many incidents become obvious.

NYDFS describes patterns like:

Requesting devices to ship to an alternative location right before employment starts

Using VPNs to appear local

Installing remote access tools to enable overseas access

In the KnowBe4 case widely reported in 2024, a newly hired remote engineer used a stolen U.S. identity, and the organization discovered suspicious activity tied to the workstation after it was received. Reporting also described use of VPNs and an "IT mule laptop farm" style drop location for devices.

Deepfake interview detection signals that matter (voice, video, and workflow)

The goal is not to "spot a deepfake" with certainty in real time. The goal is to catch clusters of inconsistencies and trigger structured verification before you extend trust.
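One way to make "clusters, not single glitches" concrete is to escalate only when signals come from more than one independent category. The sketch below is an assumption-laden illustration: the category names, the `Signal` type, and the threshold of two categories are all hypothetical choices, not a published standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    category: str  # e.g. "voice", "video", "cheating", "workflow" (illustrative labels)
    note: str      # what was observed and when


def should_escalate(signals, min_categories: int = 2) -> bool:
    """Escalate when observations span at least `min_categories` distinct categories."""
    categories = {signal.category for signal in signals}
    return len(categories) >= min_categories


# A single video glitch alone does not trigger verification.
one_glitch = [Signal("video", "brief lip-sync drift at 12:04")]

# Signals from two independent categories do.
cluster = [
    Signal("video", "brief lip-sync drift at 12:04"),
    Signal("video", "warping on head turn at 12:09"),
    Signal("workflow", "phone number shared with another applicant"),
]

escalate_one = should_escalate(one_glitch)
escalate_cluster = should_escalate(cluster)
```

Counting categories rather than raw observations means two video artifacts from a bad connection do not trigger escalation on their own, while a video artifact plus a reused phone number does.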

AI voice fraud signals during recruiter screens

Look for patterns that persist across the conversation.

High-signal cues:

Consistent response latency after you interrupt or change topic

Oddly uniform pacing and tone across emotional or complex topics

Background noise that changes abruptly mid-answer

Candidate tries to avoid live conversation and pushes hard for async

Video interview fraud signals during live calls

Do not over-index on a single glitch. Watch for multiple signals.

High-signal cues:

Speech and lip sync drift, especially on fast syllables

Facial artifacts around mouth, jawline, hairline, glasses

Visual warping when the head turns quickly

Lighting inconsistency (face exposure does not match room)

IC3 specifically highlights mismatches between audio and visible lip movement or actions in reported complaints.

Cheating and live assistance signals (AI or a second person)

This is increasingly the dominant problem, even when the identity is legitimate.

High-signal cues:

Candidate answers complex questions too cleanly, but cannot do simple live follow-ups

Candidate struggles with "show your work" tasks, like narrating a decision while editing a doc or debugging

Frequent glances to a consistent off-screen location

A short delay followed by a perfect, essay-like response

Workflow signals that scale across your funnel

These signals are powerful because they are not subjective.

Examples:

Reused phone numbers, especially VOIP numbers

Duplicated resume content across candidates

IP and physical location inconsistencies and VPN/proxy usage

Last-minute address changes or mail forwarding patterns
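Duplicated resume content is one of the easier workflow signals to check mechanically. The sketch below compares resumes by overlapping three-word sequences (word shingles) and Jaccard similarity. This is one common near-duplicate technique chosen for illustration. The 0.6 threshold and the sample texts are assumptions, and a production system would need tuning against real data.

```python
import re
from itertools import combinations


def shingles(text: str, size: int = 3) -> set:
    """Split text into lowercase words and return the set of overlapping word triples."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Share of shingles two texts have in common (0.0 to 1.0)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def similar_resumes(resumes: dict, threshold: float = 0.6):
    """Return (id_a, id_b, score) for every pair at or above the threshold."""
    sets = {cid: shingles(text) for cid, text in resumes.items()}
    flagged = []
    for (id_a, set_a), (id_b, set_b) in combinations(sets.items(), 2):
        score = jaccard(set_a, set_b)
        if score >= threshold:
            flagged.append((id_a, id_b, round(score, 2)))
    return flagged


# Hypothetical resume snippets for illustration only.
resumes = {
    "A1": "Built scalable data pipelines in Python and deployed microservices on AWS for a fintech client",
    "A2": "Built scalable data pipelines in Python and deployed microservices on AWS for a healthcare client",
    "A3": "Managed a retail store and trained new staff on point of sale systems",
}
flagged = similar_resumes(resumes)
```

Only the A1/A2 pair is flagged, because the two texts differ by a single word. Comparing every pair scales poorly on large applicant pools, so a real pipeline would likely index shingles rather than loop over all combinations.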

Layered prevention tactics that actually stop deepfake interview fraud

If you only rely on one check, fraud will route around it. Layered defenses work because they force an attacker to beat independent gates.

1) Make identity continuity a requirement

Identity is not "verified" once. It is maintained.

Do this:

Keep the same contact methods from initial screen to offer

Flag deviations automatically

Cross-check for duplicates in resume content and contact info

IC3 recommends cross-checking HR systems for other applicants with the same resume content and contact information.
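Flagging continuity deviations automatically can be as simple as comparing each stage's contact details against the ones captured at the first screen. The sketch below assumes a hypothetical record format (stage name plus a contact dictionary) and is not tied to any specific tool.

```python
def continuity_deviations(stages):
    """Compare each later stage's contact details with the first stage's.

    `stages` is an ordered list of (stage_name, contact_dict) pairs.
    Returns a list of (stage_name, field, original_value, new_value).
    """
    if not stages:
        return []
    _, baseline = stages[0]
    deviations = []
    for stage_name, contact in stages[1:]:
        for field, original in baseline.items():
            current = contact.get(field)
            if current != original:
                deviations.append((stage_name, field, original, current))
    return deviations


# Hypothetical candidate history for illustration only.
stages = [
    ("recruiter_screen", {"email": "sam@example.com", "phone": "4155550100"}),
    ("panel_interview", {"email": "sam@example.com", "phone": "4155550100"}),
    ("offer", {"email": "sam@example.com", "phone": "6465550142"}),
]
deviations = continuity_deviations(stages)
```

In this example the phone number changes at the offer stage, which is exactly the kind of late switch worth a quick, standardized follow-up. People do change numbers for ordinary reasons, so a deviation should prompt verification rather than rejection.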

2) Add finalist-stage identity verification (ID + camera match)

You do not need bank-level friction for every applicant. You do need high confidence before offers and access.

NYDFS recommends stringent identity verification during hiring, including using more than one government document, confirming physical and IP address locations, and confirming that the pictures from the applicant’s identification documents match the person on camera.

This is where Tenzo can sit naturally in your funnel. Instead of asking recruiters to improvise, Tenzo can standardize an identity verification step where candidates hold up an ID and the system prompts consistent checks, so you get the same evidence and audit trail across candidates.

3) Detect AI-assisted cheating and live coaching

Remote interviews are now "open book" unless you explicitly make them otherwise.

Strong approaches:

Use structured questions and rubrics that reward reasoning, not rehearsed prose

Require at least one live "show your work" step for high-stakes roles

Add cheating detection to flag real-time answer feeds and suspicious interaction patterns

Tenzo is built for this reality. It can help detect when candidates appear to be using AI copilots during interviews, and it can flag signals consistent with receiving real-time help from others, so your evaluation remains meaningful.

4) Verify location when it impacts risk and compliance

Not every role needs location verification. Some absolutely do.

NYDFS specifically calls out confirming applicants’ physical and IP address locations and detecting VPN and proxy usage, especially during the interview process.

IC3 also notes that North Korean IT worker schemes can involve obfuscated identities, along with operational patterns that show up during hiring and onboarding.

Tenzo can support location verification as a targeted control. This is most useful for roles with system access, customer data exposure, or regulatory requirements where location and identity assurance matter.
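As a rough illustration of what a location consistency check compares, the sketch below takes values that an upstream service would have to supply (the country resolved from the session IP and a VPN/proxy indicator) and returns plain-language flags. The field names and flag labels are hypothetical, and this is not a description of how Tenzo or any other product implements the control.

```python
from dataclasses import dataclass


@dataclass
class SessionInfo:
    claimed_country: str  # what the candidate stated, e.g. "US"
    ip_country: str       # assumed to come from an upstream IP geolocation lookup
    vpn_or_proxy: bool    # assumed to come from an upstream VPN/proxy detection service


def location_flags(session: SessionInfo) -> list:
    """Return the list of location inconsistencies found for one interview session."""
    flags = []
    if session.vpn_or_proxy:
        flags.append("vpn_or_proxy_detected")
    if session.ip_country.upper() != session.claimed_country.upper():
        flags.append("ip_country_differs_from_claimed")
    return flags


# Hypothetical sessions for illustration only.
consistent = location_flags(SessionInfo("US", "us", False))
inconsistent = location_flags(SessionInfo("US", "RO", True))
```

A VPN on its own is common and often benign, which is why the function reports flags instead of making a decision. The flags feed the same cluster-based escalation used for other signals.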

5) Tighten device and access controls after hire

Assume some fraud will slip through, then design your onboarding to limit blast radius.

NYDFS recommends controls like tracking and geolocating corporate laptops and flagging address changes, suspicious IP locations, unusual network traffic, and unapproved remote access tools.

This is the recruiting-to-security handshake many companies lack. Your hiring stack should not end at "offer accepted." It should feed structured risk signals to onboarding and IT so access ramps safely.

A copy/paste deepfake interview detection checklist for recruiting teams

Use this as a lightweight SOP. It is designed to be fair, consistent, and evidence-based.

Before the interview

Check for duplicate resumes or repeated phrasing across applicants

Check for reused phone numbers and disposable-looking emails

Confirm the interview format (camera-on for live video stages)

Decide whether this role needs finalist identity and location verification

During the interview

Run one live reasoning check

"Walk me through how you would solve X, and narrate your decision making as you go."

Watch for clusters of signals

Audio-video mismatch or strange delays

Unnatural reading patterns

Avoidance of basic verification steps

After the interview

Document the specific signals observed (what happened, when it happened)

Escalate to a standardized verification step if any high-risk cluster appears

For finalists, run identity verification and confirm camera match to ID

For higher-risk roles, verify location and flag VPN/proxy inconsistencies

How Tenzo fits into a modern anti-fraud recruiting workflow

Most ATS and interview tools were built for throughput. They assume the interview is a trustworthy measure of identity and capability.

Tenzo is designed for the world you are hiring in now.

Tenzo can be woven into your funnel as a layered defense system that helps you:

Verify identity with a structured ID hold-up step, confirming the candidate is who they claim to be

Detect cheating when candidates attempt to use real-time AI answer tools during interviews

Detect live assistance patterns consistent with someone else feeding answers

Verify location when it matters for risk, compliance, or customer requirements

Use dozens of additional signals across email, phone, resume, and workflow behavior to catch repeat patterns and inconsistencies

You do not need to "add friction everywhere." You need the ability to turn on stronger controls when risk is higher, when anomalies appear, or when you are about to extend an offer.

If you want to see what that looks like in a real recruiting workflow, you can book a demo or consultation with Tenzo.

FAQ: deepfake interview fraud, AI voice fraud, and video interview fraud

How common are deepfake interviews in recruiting?

Enough that U.S. law enforcement has issued public warnings. IC3 has warned about increases in complaints involving deepfakes and stolen PII used to apply for remote roles, including voice spoofing and audio-video mismatches.

Are North Korean remote worker schemes relevant to recruiting teams?

Yes, because the hiring process is a primary entry point. IC3 has warned that North Korean IT workers have used face-swapping in video job interviews and recommends identity verification and cross-checking for reused resume content and contact information.

What is the single highest ROI prevention step?

Finalist-stage identity verification, combined with continuity across the funnel. NYDFS specifically recommends matching ID photos to the person on camera and confirming physical and IP locations, especially during the interview process.

How do we reduce fraud without discriminating or creating bias?

Anchor your process on objective signals and consistent steps. Avoid subjective judgments about appearance, accent, or background. Use documented anomalies, repeat patterns, and standardized verification steps.